Public Technical Note · April 2026

Auctor

Governed AI memory & auditable release.

Angad Bank

Basel, Switzerland · April 2026

Large language models are powerful interfaces for language, reasoning, summarisation and planning. They are not, by themselves, reliable systems of record. In regulated environments the operative question is not whether a model can produce a plausible answer; it is whether the organisation can prove which answer is allowed to be used: which source it came from, whether the source is current, whether the requester is authorised, whether restricted content was redacted, whether unsupported claims were refused rather than guessed, and whether the entire decision path can be reconstructed from an audit trail.

Auctor is a governed AI memory and release framework for that gap. It treats every fact-bearing output as a transaction that must satisfy an explicit governance contract before it leaves the system. The framework guarantees source binding, policy compliance, verification, immediate updateability without retraining, and reconstructable audit: five operational properties an organisation can demand and inspect from the outside.

This public technical note is intentionally narrow. It describes the claim Auctor makes, the contract it enforces, and the evidence gathered under that contract. It does not describe the implementation, the underlying research, or the architecture by which the contract is satisfied. Those remain outside the public record by design and are available only to qualified partners under licence.

Fluency is not authority.

A modern language model can summarise a document well without knowing whether the document is superseded. It can retrieve a relevant source without knowing whether the requester is permitted to see it. It can cite something without that citation being the correct authority. It can answer with confidence without that answer being auditable. None of those failure modes is visible at the surface, which is why they are dangerous.

That is the gap between an answer that sounds right and an answer that is defensible. In banks, insurers, public agencies, healthcare and pharma, only a defensible answer is acceptable.

What is missing is not another chatbot, not a longer context window, and not better retrieval. What is missing is governance: an explicit decision about what the system is allowed to know, cite, release, refuse, redact, update, revoke, and defend.

Every fact-bearing output passes a contract.

Auctor commits the deployment to a contract. A fact-bearing release must be source-bound, policy-compliant, verified, and recorded, or it must not leave the system. There is no path by which a plausible answer reaches the user without an attached source decision, and no path by which an unverified claim is presented as institutional fact.

When the checks do not hold, the system does not guess. It corrects, redacts, refuses, or abstains, in each case on the record and with a reason an auditor can inspect afterwards.
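As an illustration only, the release-or-withhold behaviour described here can be sketched as a small gate function. This is not Auctor's implementation, which this note does not disclose. Every name in it (`Outcome`, `SourceRecord`, `Decision`, `release`) is hypothetical, and the mapping from each failed check to a particular outcome is an assumption made for the example.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Outcome(Enum):
    RELEASE = "release"
    CORRECT = "correct"
    REDACT = "redact"
    REFUSE = "refuse"
    ABSTAIN = "abstain"


@dataclass(frozen=True)
class SourceRecord:
    """Hypothetical source record the gate checks a claim against."""
    source_id: str
    value: str
    current: bool      # False once the source has been superseded
    restricted: bool   # True if only cleared requesters may see the value


@dataclass(frozen=True)
class Decision:
    outcome: Outcome
    text: Optional[str]        # what the requester actually receives
    source_id: Optional[str]   # the attached source decision, if any
    reason: str                # recorded for later inspection


def release(claim: str, source: Optional[SourceRecord],
            requester_cleared: bool, audit: List[Decision]) -> Decision:
    """Return a decision for one fact-bearing claim and record it.

    The order of checks and the outcome chosen for each failure are
    assumptions for this sketch, not a description of Auctor.
    """
    if source is None:
        decision = Decision(Outcome.ABSTAIN, None, None, "no bound source")
    elif not source.current:
        decision = Decision(Outcome.REFUSE, None, source.source_id,
                            "source superseded")
    elif source.restricted and not requester_cleared:
        decision = Decision(Outcome.REDACT, "[REDACTED]", source.source_id,
                            "requester not cleared")
    elif claim != source.value:
        decision = Decision(Outcome.CORRECT, source.value, source.source_id,
                            "claim differed from source")
    else:
        decision = Decision(Outcome.RELEASE, claim, source.source_id,
                            "verified against source")
    audit.append(decision)  # every path is recorded, including refusals
    return decision
```

The point of the sketch is structural: every branch returns a decision carrying a source identifier (or its explicit absence) and a reason, and every branch appends to the audit list before returning, so no answer leaves without a record.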

The model remains the interface. The contract becomes the authority. Institutional knowledge can change at institutional speed, without retraining the underlying model and without taking the answer surface offline.
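One way to picture knowledge changing without retraining is a versioned store that sits outside the model, so that publishing or revoking a record changes what may be released on the very next request. The sketch below is a hypothetical in-memory `SourceStore` written for this illustration; it makes no claim about how Auctor stores or updates its sources.

```python
from typing import Dict, List, Optional, Set


class SourceStore:
    """Hypothetical versioned store: the newest unrevoked version is authoritative."""

    def __init__(self) -> None:
        self._versions: Dict[str, List[str]] = {}
        self._revoked: Set[str] = set()

    def publish(self, key: str, value: str) -> int:
        """Add a new version; it supersedes earlier versions immediately."""
        versions = self._versions.setdefault(key, [])
        versions.append(value)
        self._revoked.discard(key)  # republishing lifts an earlier revocation
        return len(versions)        # 1-based version number

    def revoke(self, key: str) -> None:
        """Withdraw the key; lookups return None until it is republished."""
        self._revoked.add(key)

    def current(self, key: str) -> Optional[str]:
        """Return the authoritative value, or None if unknown or revoked."""
        if key in self._revoked or key not in self._versions:
            return None
        return self._versions[key][-1]
```

In this toy model the language model is never touched: a lookup after `publish` sees the new value, and a lookup after `revoke` sees nothing to release.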

1.00 · Release accuracy under the contract

120M+ · Real public catalogue rows under exact source binding

0 · False authoritative answers, false abstentions

5 · Operational guarantees the contract enforces

Exact source binding at real-data scale

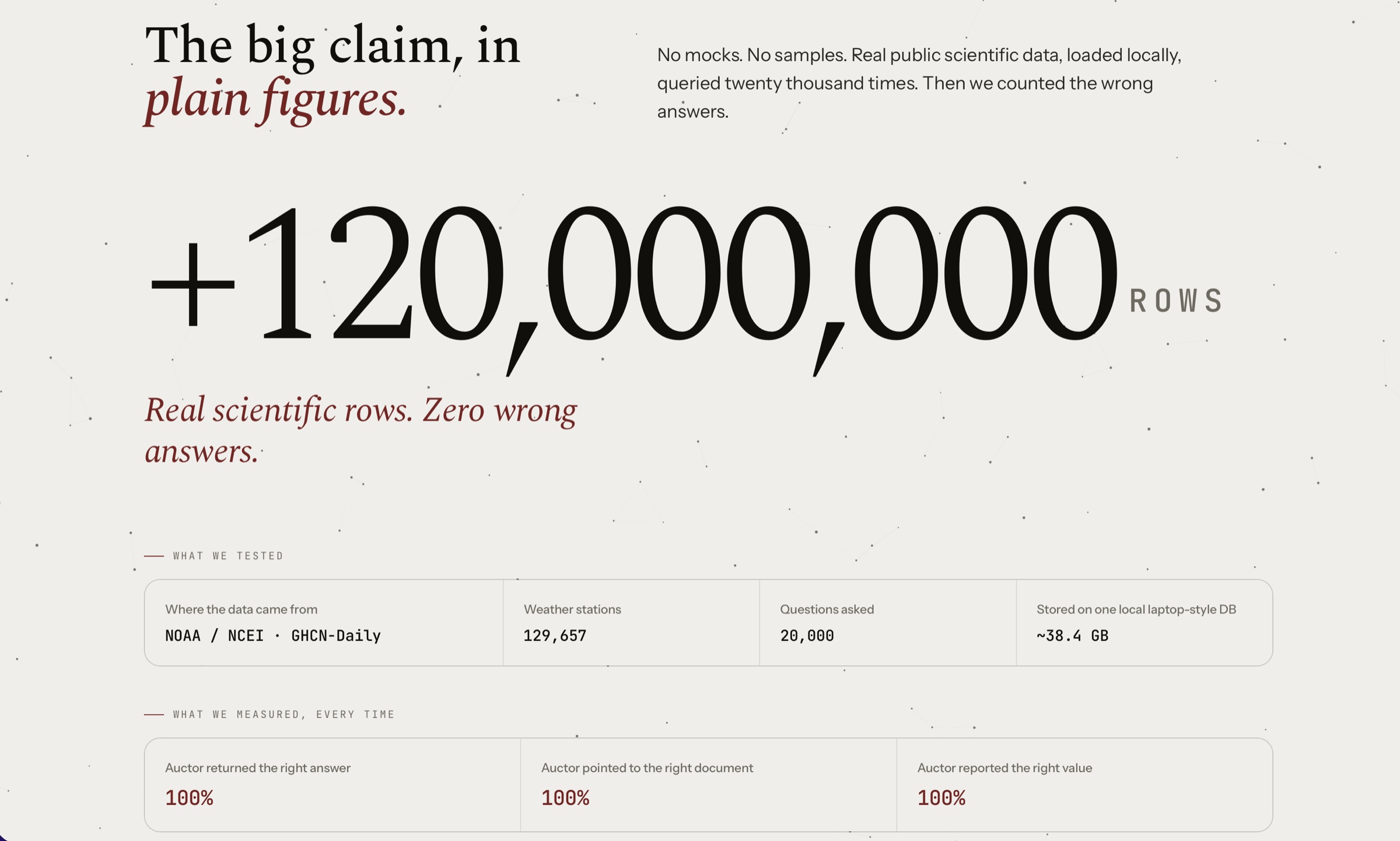

Field, retrieval, source, and value accuracy 1.00 over a real public scientific catalogue with more than 120 million rows, evaluated with tens of thousands of probes against the typed source-selection contract.

Verified multi-step natural-language workflow

A normal-language request drives source selection, calculation, intermediate notes, a rendered report, and verification end-to-end. Workflow and report-verification rate 1.00 in the local gate.

Diverse enterprise document surfaces

PDF text, OCR text, email, contracts, tables, tickets, chats, and logs are each resolved to source-bound records or rejected. Exact canonical, source-binding, and rejection accuracy 1.00. No raw document text in the result artifact.

Production-style runtime contract

IAM/RBAC/ABAC, tenant envelope encryption, key rotation, durable queues, observability, tamper-evident audit, legal holds, deletion-SLA, and backup expiry, all in one harness. Zero false allows or denies, full encrypted-payload rate, audit chain validated.
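For readers unfamiliar with the term, "tamper-evident audit" is commonly achieved with a hash chain, in which each entry commits to the hash of the entry before it. The sketch below shows that general technique using Python's standard `hashlib` and `json` modules. It is an assumption that a hash chain is a fair illustration here; the note does not say how Auctor's audit chain is built, and the function names are hypothetical.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder previous-hash for the first entry


def _digest(prev_hash: str, payload: Dict[str, Any]) -> str:
    """Hash the previous hash together with a canonical form of the payload."""
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(chain: List[Dict[str, Any]], payload: Dict[str, Any]) -> Dict[str, Any]:
    """Append a payload, linking it to the hash of the last entry."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    entry = {"prev": prev_hash, "payload": payload,
             "hash": _digest(prev_hash, payload)}
    chain.append(entry)
    return entry


def validate_chain(chain: List[Dict[str, Any]]) -> bool:
    """Recompute every link; any edited, removed, or reordered entry fails."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A chain like this detects modification but does not prevent it; in practice the latest hash would also need to be anchored somewhere the writer cannot alter, which is outside the scope of this sketch.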

The contract corrects an imperfect model

Across more than one local model family, the raw model output below the contract is deliberately imperfect. Governed release still reaches 1.00 on held-out field decode and open-ended reasoning, with zero false authoritative answers and zero false abstentions.

Retrieval alone does not solve correctness

A heavily engineered metadata-filtered retrieval system matches Auctor only by rebuilding the same typed source-selection contract internally. Retrieval-only baselines fail the regulated exact-source task or are orders of magnitude too slow.

This note discloses what Auctor's contract guarantees and what was measured against it. It does not disclose the underlying research, the internal architecture, or the implementation by which the contract is satisfied. Those remain licensed and outside the public record. Pilots, licensing, and qualified-partner briefings begin at impact@angad.swiss.

A bounded claim, deliberately

These are bounded transaction pass rates under explicit contracts in local evaluation gates. They are not claims of universal model truth, of perfect open-world reasoning, or of external cloud certification. The next validation layers are independent replication; customer-environment pilots against managed IAM, KMS, queues, observability, backup and SIEM; adversarial red-team testing; and governance review by the deploying organisation.

Interested in a pilot or licensing?

For licensed technical briefings, customer-environment validation, or qualified-partner access, reach out directly.
